Ian J. Latchmansingh

Human-Centric Design Leader & Technologist in NYC

Broadcast Monitoring using Machine Learning and Computer Vision

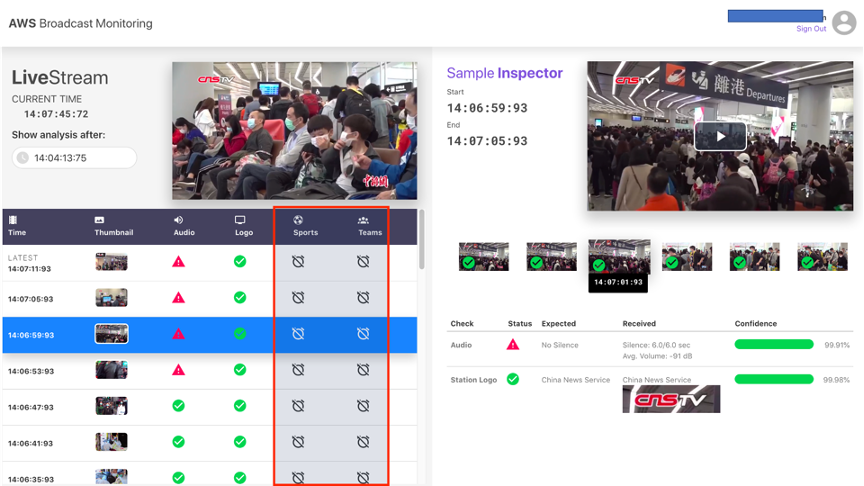

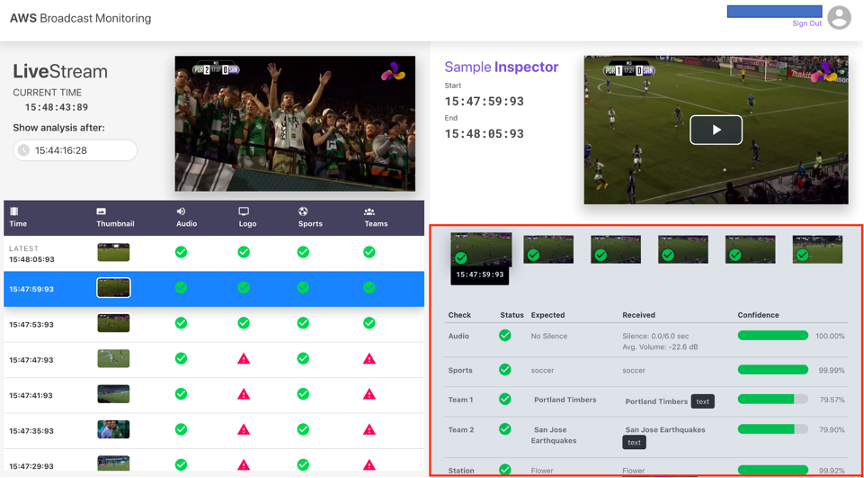

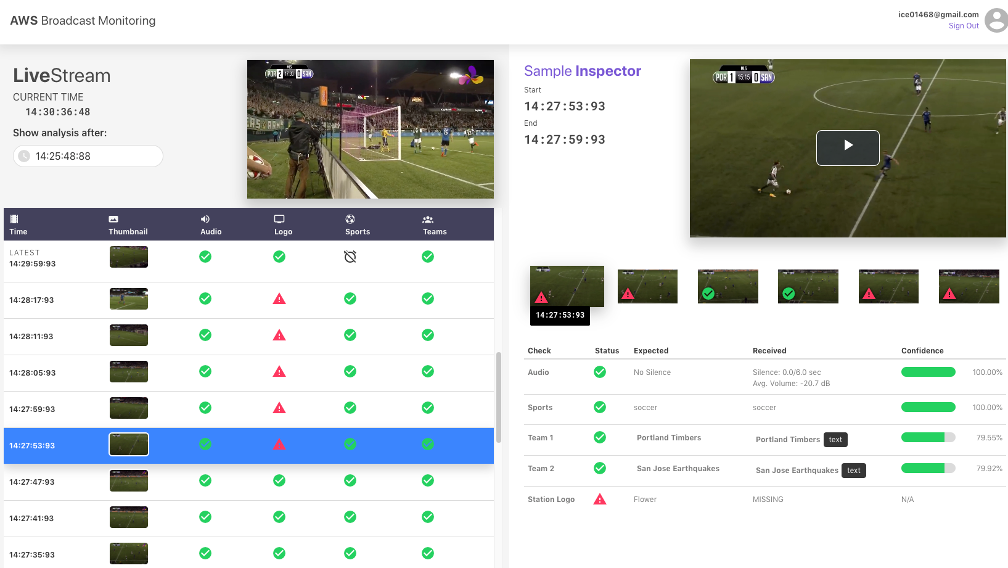

Broadcast monitoring is a service for broadcasters and over-the-top (OTT) streamers that runs a large number of quality checks on a given media source. The issues it catches range from relatively minor errors, such as misspellings or audio volume problems, to more critical ones, such as content errors (broadcasting the wrong media) and incorrect audio (the wrong language or content).

Traditionally, the higher-level quality checks are performed manually by operators who constantly watch broadcast streams for issues and escalate them to the sources. A single operator may watch anywhere from 6 to 34 simultaneous streams, so this approach does not scale with the available workforce. As the number of OTT streams in particular grows, quality monitoring services may find it essential to augment their workforce with machine learning.

This solution, for which I designed and developed the interface, automates higher-level monitoring tasks that were previously manual chores. Human workers can then focus on work that requires their judgment, take action sooner, and handle a higher volume of broadcasts without sacrificing efficacy.

This prototype was developed in six weeks alongside engineers Adam Best and Angela Rouhan Wang, who used AWS AI services such as Amazon Rekognition to analyze the content of an HTTP Live Streaming (HLS) video stream. The analysis runs in near real time (under 15 seconds per sample).
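To give a rough idea of what one such per-sample check could look like, here is a minimal Python sketch. It is not the prototype's actual code: it assumes a frame has already been extracted from the HLS stream as JPEG bytes, and it uses a deliberately simple, hypothetical rule ("the frame should contain these labels") as a stand-in for a content check. `detect_labels` is the Amazon Rekognition image-labelling call as exposed by boto3; `FrameCheck`, `check_frame`, and `flag_issues` are illustrative names, not part of any library.

```python
from dataclasses import dataclass
from typing import Any, Iterable, List, Set


@dataclass
class FrameCheck:
    """Result of checking one sampled frame (hypothetical structure)."""
    timestamp_s: float   # position of the sample in the stream, in seconds
    labels: List[str]    # label names Rekognition reported for the frame
    missing: List[str]   # expected labels that were not detected

    @property
    def ok(self) -> bool:
        return not self.missing


def check_frame(client: Any, frame_jpeg: bytes, timestamp_s: float,
                expected_labels: Set[str],
                min_confidence: float = 80.0) -> FrameCheck:
    """Label one frame and compare the result against the expected content.

    `client` is assumed to be a boto3 Rekognition client, or any object
    with a compatible `detect_labels` method.
    """
    response = client.detect_labels(
        Image={"Bytes": frame_jpeg},
        MaxLabels=20,
        MinConfidence=min_confidence,
    )
    labels = [label["Name"] for label in response["Labels"]]
    # Assumed rule: every expected label must appear in the frame.
    missing = sorted(expected_labels - set(labels))
    return FrameCheck(timestamp_s, labels, missing)


def flag_issues(checks: Iterable[FrameCheck]) -> List[FrameCheck]:
    """Return only the samples an operator would need to look at."""
    return [check for check in checks if not check.ok]
```

In a real deployment the client would come from `boto3.client("rekognition")`, and frames would be sampled from the stream at whatever interval keeps the end-to-end delay within the target. Passing the client in as a parameter keeps the check easy to exercise without AWS credentials.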

©MMXXI

Perfection is achieved, not when there is nothing more to add, but when there is nothing left to take away.